Every time I meet Dr Leanne Hirshfield, I am impressed by her energy and her passion for fNIRS technology. It is always a pleasure to listen to her talk about her latest research. When we last met, at a Starbucks in the Bay Area in June 2019, she told me about something I had never heard of: remote fNIRS.

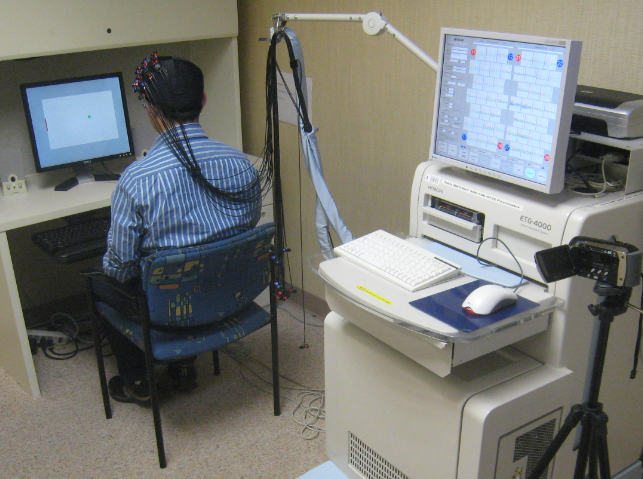

So far, every fNIRS device has required the optodes to be in direct contact with the scalp. Before any measurement, we have to spend at least 5-10 minutes putting the cap on the participant’s head, checking the signal quality, and perhaps parting the hair with a small stick. It’s a lucky day if we get good contact on the first try; more often than not, we have to repeat these steps until most optodes are in good contact with the scalp. It’s definitely not a pleasant experience.

In addition, the cap and optodes often cause pain when worn for more than 20 minutes. This is particularly hard on young children and on patients with mental disorders, who are less tolerant of pain.

That said, it had never occurred to me that a totally different technology might eliminate the pain, until Leanne told me.

What is remote fNIRS?

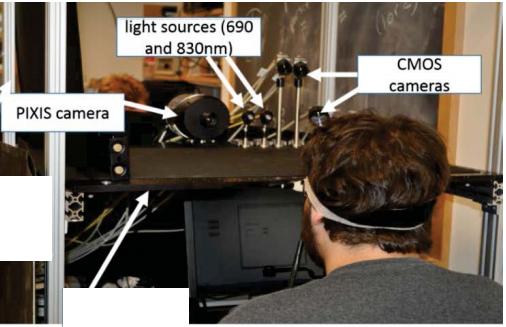

In a nutshell, remote fNIRS uses devices that send and receive near-infrared light through the air. It’s like taking a photo with a smartphone, where physical contact with the subject is not necessary. Let’s take a look at the remote fNIRS setup Leanne built:

In this setup, the light source is a ThorLabs MCLS-1 Multiple Channel Laser (690 and 830 nm). The detector is a PIXIS camera. A much cheaper and smaller detector, a CMOS camera, was also tested for comparison with the PIXIS camera.

No wires! If this works, we can imagine it being used in a much wider range of applications: patients with low tolerance to pain, long studies (e.g. sleep), easy integration with virtual reality devices, and even measuring many (say, a few hundred) subjects at the same time, to name just a few examples.

Of course, the key question is whether the measured signal is reliable.

To answer this, Leanne’s team ran three validation experiments comparing the signal collected by ISS, a commercial (wired) fNIRS device, with the signal collected by remote fNIRS:

- Arm blood occlusion

- Brain, breath holding

- Brain, memory workload

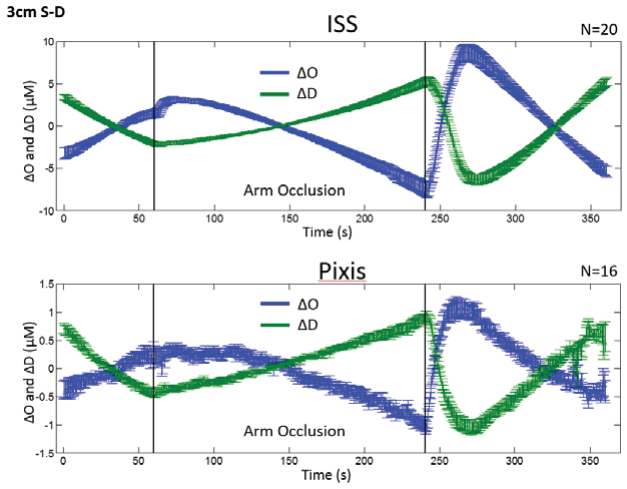

The results are promising. Let’s take a look at the arm blood occlusion experiment. In the figure below, the top panel shows the oxy- and deoxy-Hb changes measured by ISS, and the lower panel shows the same signals from the remote fNIRS. It’s visually clear that the two measurements match each other.

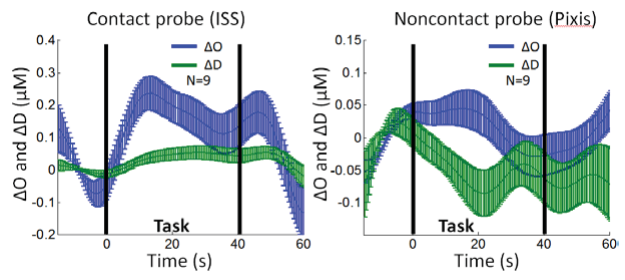
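As background on where oxy- and deoxy-Hb traces like these come from: fNIRS systems generally convert light-intensity changes at two wavelengths (690 and 830 nm in this setup) into concentration changes using the modified Beer-Lambert law. The sketch below is a generic illustration of that conversion, not Leanne’s actual pipeline. The function name, the single shared pathlength factor, and the coefficient values are all assumptions made for the example; in particular, how the effective path length should be defined for a contact-free geometry is not covered by this post.

```python
def mbll_two_wavelengths(d_od_1, d_od_2, eps_1, eps_2, path_cm, dpf=1.0):
    """Solve the two-wavelength modified Beer-Lambert system (hypothetical helper).

    d_od_1, d_od_2: change in optical density at wavelength 1 and wavelength 2.
    eps_1, eps_2:   (eps_HbO, eps_HbR) extinction coefficients at each wavelength.
    path_cm, dpf:   geometric path length and differential pathlength factor
                    (one shared DPF is a simplification; real analyses often
                    use a separate value per wavelength).
    Returns (delta_HbO, delta_HbR) in the concentration unit implied by eps.
    """
    a, b = eps_1
    c, d = eps_2
    det = a * d - b * c
    if det == 0:
        raise ValueError("degenerate extinction coefficients: system not solvable")
    length = path_cm * dpf
    if length <= 0:
        raise ValueError("effective path length must be positive")

    # Model: d_od_i = (eps_HbO_i * dHbO + eps_HbR_i * dHbR) * length
    y1 = d_od_1 / length
    y2 = d_od_2 / length

    # Invert the 2x2 system with Cramer's rule.
    d_hbo = (d * y1 - b * y2) / det
    d_hbr = (a * y2 - c * y1) / det
    return d_hbo, d_hbr


if __name__ == "__main__":
    # Placeholder coefficients chosen for easy arithmetic. They are NOT real
    # haemoglobin extinction coefficients; substitute tabulated values.
    eps_690 = (1.0, 4.0)
    eps_830 = (3.0, 2.0)
    true_hbo, true_hbr = 0.5, -0.2
    length = 3.0 * 6.0

    # Forward-simulate optical density changes, then recover the inputs.
    od_690 = (eps_690[0] * true_hbo + eps_690[1] * true_hbr) * length
    od_830 = (eps_830[0] * true_hbo + eps_830[1] * true_hbr) * length
    print(mbll_two_wavelengths(od_690, od_830, eps_690, eps_830, path_cm=3.0, dpf=6.0))
```

The round trip in the demo only checks the algebra: it simulates optical density changes from known concentration changes and confirms that the solver recovers them.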

Now let’s look at the results from the memory workload experiment. The measurement from the remote fNIRS is noisier, but the overall trend is still similar.

For the other validation experiment (breath holding), you may read Leanne’s own writing (link at bottom).

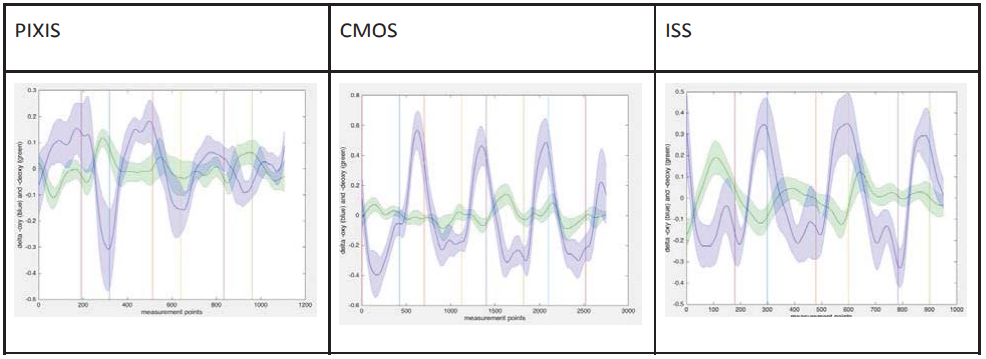

What about the cheaper and smaller CMOS camera detector? Leanne also ran the three experiments with it. Here I will show just one:

I would say the signal from the CMOS detector is similar to the signal from ISS; it might be even better than the PIXIS detector.
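The comparisons in this post are made by eye. One simple way to put a number on "similar" would be to correlate the two time series. The sketch below computes a Pearson correlation coefficient in plain Python. It assumes the two recordings have already been resampled onto the same time base, and the traces in the demo are synthetic stand-ins, not data from Leanne’s experiments.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equally long, time-aligned signals."""
    if len(x) != len(y):
        raise ValueError("signals must have the same number of samples")
    if len(x) < 2:
        raise ValueError("need at least two samples")

    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)

    # Sums of squared deviations and of cross-deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)

    if sxx == 0 or syy == 0:
        raise ValueError("a constant signal has no defined correlation")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Synthetic example: a slow oscillation as the "wired" trace, and the same
    # trend with a small added ripple as the "remote" trace.
    n = 200
    wired = [math.sin(2 * math.pi * i / n) for i in range(n)]
    remote = [w + 0.2 * math.sin(2 * math.pi * 17 * i / n) for i, w in enumerate(wired)]
    print(f"r = {pearson_r(wired, remote):.3f}")
```

A value near 1 would indicate that the remote trace follows the wired one closely. Correlation ignores differences in amplitude and offset, so it complements a visual check rather than replacing it.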

The validation results are promising, and I am very excited about the future of remote fNIRS. I still had a few questions, so I did a brief interview with Leanne. Here are her answers:

- When do you think a commercial remote fNIRS device will be available? Will it be cheaper than current fNIRS devices?

Leanne: Great question! As a researcher based in the human-computer interaction (HCI) domain, my focus is primarily on pushing the envelope through advancements in basic science, so I don’t have any experience pushing products to market. My hope is that we’ll see remote fNIRS in the next 5 years, in much the same way that we’re seeing remote measurements of other biometric information, such as heart rate. It certainly could end up being less expensive than traditional fNIRS devices. We created and evaluated two versions of the remote fNIRS. The first version used an expensive specialized camera to act as a detector. The camera was very expensive and designed to have high specificity for capturing the wavelengths of 690nm and 830nm. The second version used two off-the-shelf CCD cameras, where each camera was configured to act as a detector for one of the two fNIRS wavelengths (around 690 and 830nm). The second version was much less expensive and it performed well in our validation studies. Light sources are pretty cheap. It’s the detectors that cost so much in fNIRS systems. So the fact that we can mimic light detectors with off-the-shelf CCD cameras certainly suggests that remote fNIRS devices could indeed be less expensive than their head-mounted counterparts.

- What are the top two applications where you think remote fNIRS has the biggest potential?

Leanne: There are many applications where having a non-contact way to measure hemodynamic changes in the brain would be useful. It could be helpful to take measurements of sensitive populations (children, babies, people with psychiatric challenges, Alzheimer’s etc.) in the medical domain, in ways that don’t require contact with the head. And as a researcher in HCI, we do a lot of work these days building adaptive systems to improve human performance. So you could imagine a pilot, driver of a car, or person at his/her computer, all having their prefrontal cortex measured remotely. That region is a particularly nice spot for measuring things relating to cognitive workload. So one could envision intelligent systems adapting in real time to the user’s level of workload, for example. These are the types of things we look at in our lab: can we build accurate models to predict workload in real time using fNIRS data, and if we can get those accurate predictions, how might an intelligent system adapt? Fun stuff!

- What is the biggest challenge in this technology?

Leanne: Right now we’re working on tackling the problem of motion. Basically things break down the moment people move their heads. But we have some really great minds working on that problem, with a plan that remote-fNIRS devices in the future can correct for small head movements.

- If a company intends to manufacture a remote fNIRS device, how should they contact you regarding the patent?

Leanne: They’re welcome to shoot me an email at [email protected]

Dr Leanne Hirshfield’s report can be downloaded from https://apps.dtic.mil/sti/pdfs/AD1026048.pdf.

Dr Leanne Hirshfield’s remote fNIRS technology is patented. The patent can be found here.

If you want to follow Dr Leanne Hirshfield’s new publications, you can enter the following two keywords into Stork:

1. Leanne Hirshfield[au]

2. "remote fNIRS" OR "remote NIRS"