As researchers, we all have a dream: it would be fantastic if AI could write papers one day! This sounds like science fiction, but after using Stork’s new feature, “AI Creative Abstract”, I feel that this day is coming soon.

To test how powerful the AI is, I thought of an interesting topic: what is the brain mechanism of deceptive behavior between humans and their pet dogs? This is a topic that has not been studied so far. No one has scanned the brains of a person and their pet dog at the same time, and no articles have been published. I have had this idea for a while, but it is still vague: I don’t know how to implement it or what the results would be. I was curious what the AI would write, so I entered:

Deception between human and pet dog, an fNIRS hyperscanning study

After I clicked the “Start” button, the AI seemed to think for a few seconds, and then words appeared continuously:

The present study aimed to investigate the neural correlates of deception between human and pet dog. The participants were asked to deceive their dogs by giving a hand signal indicating that they would give food, but then not doing so. We measured functional near-infrared spectroscopy signals from both the deceiver (human) and the deceived (dog). Our results showed that there was no significant difference in oxyhemoglobin concentration changes between deception and non-deception conditions for either humans or dogs. However, we found significantly greater deoxyhemoglobin concentrations during deception compared with non-deception in both humans and dogs at bilateral temporal areas, suggesting increased brain activities related to emotional processing during deceptive interaction with pets. Our study provides evidence for shared neural mechanisms underlying social interactions involving trustful relationships such as those between human-dog dyads.

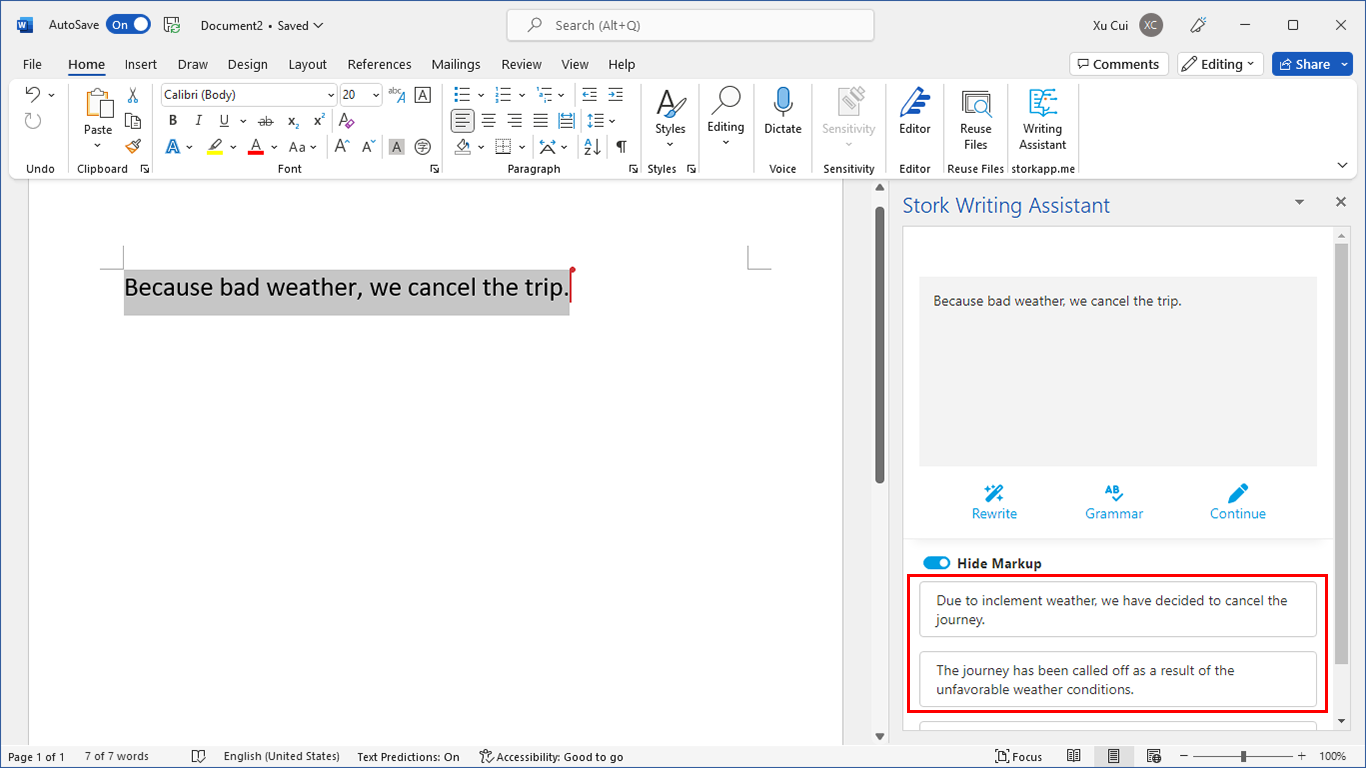

I read as it wrote, and I was shocked. In terms of language, the abstract reads like native English, with no spelling or grammatical errors; logically, it is clear and fluent, and it matches the subject I gave well. Most importantly, it produced something creative that I didn’t expect. For example, it told me how to design the “deception” experiment and which brain areas might be expected to activate.

I couldn’t stop, so I asked the AI to write a second version on the same topic:

Interactions between humans and their pets are an important part of our everyday lives. Dogs are known as sensitive and loyal companions. The present study investigated the neural correlates of deception in dogs and their human companions. Hyperscanning was used to investigate the activation of the left temporal pole, the right temporal pole, and the right prefrontal cortex in dogs and their human partners during a cooperative communicative task in which the dog had to deceive its human partner. Dogs and their partners were equipped with near-infrared spectroscopy (NIRS) hyperscanning caps to measure concurrent changes in oxy- and deoxy-hemoglobin concentrations in the temporal cortex. We found that when dogs looked at their human partner, activation was observed in the left temporal pole, the right temporal pole, and the right prefrontal cortex, whereas activation was not observed in the human temporal pole or the human prefrontal cortex. Our findings suggest that human-to-dog deception may be associated with increased activation in the right temporal pole and the right prefrontal cortex, whereas dog-to-human deception is associated with increased activation in the left temporal pole and the right prefrontal cortex. Our findings may provide new insights into the evolutionary history of human-dog communication and the role of the temporal pole and the prefrontal cortex in social cognition.

This version is also inspiring. The first two sentences serve as an introduction, and their logic is clear; I could probably use them when I write a formal article. Next come the purpose of the experiment and the brain areas to be scanned. The results of the experiment follow, listing which brain areas are active. Finally, the significance of the research is briefly discussed.

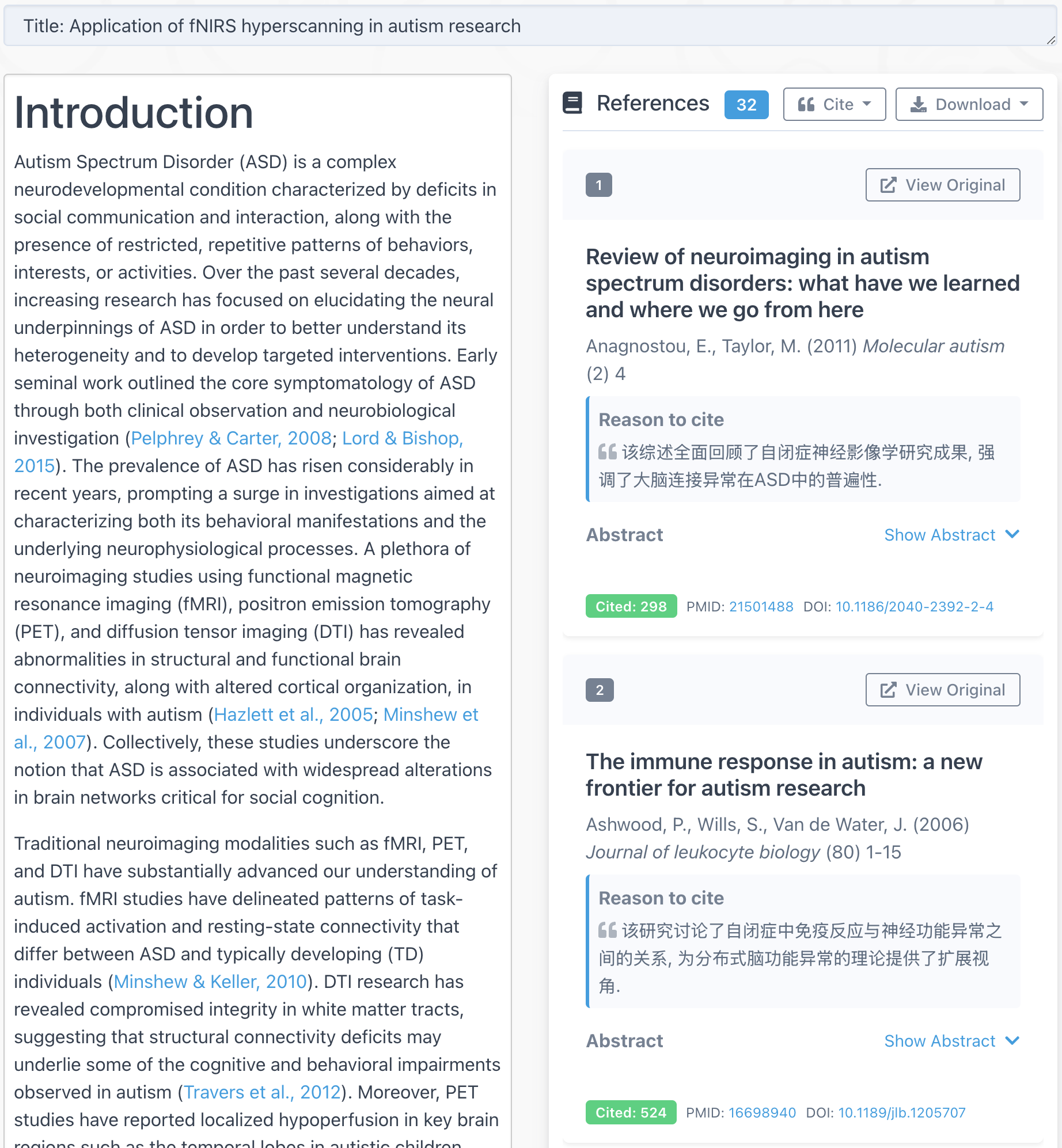

I asked the AI to write dozens of abstracts in different fields, such as cognitive science, materials science, physics, and philosophy. It wrote sensible abstracts in most cases. I felt like I was brainstorming with a knowledgeable person. Even when I entered something very vague, the AI could write something concrete, and I could always find something new in what it wrote.

Of course, I understand that these abstracts are all “made up” by the AI based on its massive reading. Some of the statements are not facts, but they still provide a lot of ideas for future research.

At this point, I have mixed feelings. The main one is excitement: AI offers us more tools and more ideas for doing research. However, I also worry: if AI can write complete papers in the future, what use will we be as researchers? Will we just verify the experimental results proposed by AI? And who will be able to tell whether a paper was written by AI or by real researchers? If no one can, will academic journals be flooded with AI-written papers reporting untrue results? As with every tool we have invented, in the end we don’t know whether we are using the tool or becoming its slave. If we face these potential problems early on, we have a better chance of creating tools that serve us instead of harming us.
